Authors:

- Viraj Patel | Director of Mechanical Engineering

- Jarrett Mui, PE, RCDD, CPP, CTS, CDT | Director of Technology

- Leo Maya, PE, LEED AP BD+C | Principal

The rapid evolution of the digital economy, catalyzed by the explosive growth of artificial intelligence and the decentralization of cloud services, has fundamentally restructured the requirements for mission-critical infrastructure. In the Western United States, particularly within high-growth corridors such as Phoenix, Hillsboro, and Salt Lake City, data center development is shifting from isolated server buildings to complex, campus-scale digital cities.

This transition demands a sophisticated understanding of how building systems work together. Extreme power density, cooling physics, network diversity, and multi-layered redundancy must be coordinated from the earliest design phases. At Design West Engineering, we see this convergence across every discipline we touch: mechanical, electrical, plumbing, fire protection, technology, and commissioning. We are positioning our integrated practice to serve this rapidly expanding market.

Data Center Architectures and Business Models

The modern data center industry is no longer a single building type. It encompasses diverse operational models and financial structures that influence everything from site selection to capital expenditure. Understanding these distinctions is essential for architects shaping the built environment around mission-critical facilities.

Hyperscale Infrastructure

Purpose-built for single massive tenants such as AWS, Microsoft Azure, or Google Cloud, these campuses span 50 to 200+ MW of IT load and require 50 to 200+ acres of land. Operating under build-to-suit models with 10- to 20-year leases, developers often invest $1 to $2 billion per campus. Hyperscale economics prioritize long-term Total Cost of Ownership (TCO) and aggressive Power Usage Effectiveness (PUE) targets.

Colocation Facilities

These shared environments lease space to dozens or hundreds of enterprise tenants, typically ranging from 5 to 50 MW. Located in urban or near-urban settings, colocation requires flexible infrastructure capable of managing mixed rack densities and diverse Service Level Agreements (SLAs). The architectural challenge is accommodating tenants with vastly different cooling, power, and connectivity requirements within a common building envelope.

The AI Factory

A specialized and rapidly growing subset, AI factories prioritize massive compute density and GPU-to-GPU interconnectedness. These facilities target 100+ MW specifically to support training and inference workloads for large language models, requiring a fundamental redesign of thermal, electrical, and structural infrastructure. For architects, the implications are significant: larger chiller yards, heavier floor loads, and substantially greater site footprints compared to traditional data centers.

Storage and Archive Facilities

Optimized for long-term data retention rather than compute performance, storage data centers prioritize high capacity, low cost per terabyte, and data durability. These facilities operate at dramatically lower power densities, typically 2 to 5 kW per rack for disk-based storage and even less for tape libraries, and rely on simpler cooling strategies such as fresh-air economization or basic precision air conditioning. Redundancy strategies often emphasize erasure coding and geographic data replication across facilities rather than full on-site N+1 infrastructure. For design teams, the key difference is floor area: storage facilities require significantly more square footage per MW of IT load than compute-focused data centers, with different structural, power distribution, and fire suppression considerations.
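To make the floor-area point concrete, the following minimal Python sketch estimates how many racks, and how much gross floor area, 1 MW of IT load occupies at different rack densities. The 30 square feet of gross area per rack (the rack plus its share of aisles) is an assumed planning figure for illustration only, and the function names are hypothetical.

```python
import math

# Assumed gross floor area per rack, including its share of aisle space.
# Illustrative planning figure only; real layouts vary widely.
ASSUMED_SQFT_PER_RACK = 30.0


def racks_for_it_load(it_load_mw: float, kw_per_rack: float) -> int:
    """Return the number of racks needed to host it_load_mw at kw_per_rack."""
    if it_load_mw < 0 or kw_per_rack <= 0:
        raise ValueError("load must be non-negative and density must be positive")
    return math.ceil(it_load_mw * 1000.0 / kw_per_rack)


def gross_area_sqft(it_load_mw: float, kw_per_rack: float,
                    sqft_per_rack: float = ASSUMED_SQFT_PER_RACK) -> float:
    """Return the gross floor area implied by the rack count."""
    return racks_for_it_load(it_load_mw, kw_per_rack) * sqft_per_rack


if __name__ == "__main__":
    # 3.5 kW is the midpoint of the 2 to 5 kW storage range quoted above;
    # 10 kW is used here as a representative enterprise compute rack.
    for label, density in [("storage", 3.5), ("enterprise compute", 10.0)]:
        racks = racks_for_it_load(1.0, density)
        area = gross_area_sqft(1.0, density)
        print(f"{label}: {racks} racks, about {area:,.0f} sq ft per MW")
```

Under these assumptions, 1 MW of storage at 3.5 kW per rack needs 286 racks, versus 100 racks at 10 kW per rack, so the storage hall needs nearly three times the floor area for the same IT load.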

Edge Data Centers

Smaller facilities, typically under 20 MW, placed near end users to reduce latency for real-time applications such as autonomous vehicles and augmented reality. Edge sites present unique design challenges: compact footprints, limited redundancy space, and heightened acoustic and architectural integration requirements. These facilities must blend into urban or suburban contexts in ways that larger campuses do not.

The AI Transformation: High-Density Compute and Its Impact on Buildings

The arrival of high-density AI accelerators has shattered traditional data center design assumptions. While a standard enterprise rack might consume 5 to 15 kW, next-generation AI systems demand an entirely different level of building infrastructure.

The pace of change is staggering. The NVIDIA GB300 NVL72, the current-generation rack-scale system shipping since late 2025, integrates 72 GPUs with 288 GB of high-bandwidth memory per GPU, draws 132 to 142 kW per rack, and weighs approximately 3,000 pounds. The next-generation NVIDIA Vera Rubin NVL72, now in production and expected to ship in the second half of 2026, will push to an estimated 190 to 230 kW per rack with 100% liquid cooling and a fully fanless design. Looking further ahead, the Rubin Ultra platform targets approximately 600 kW across a multi-rack domain by 2027. For design teams, this means the building must deliver more power, remove more heat, and support more weight per square foot than anything built before.

Structural Loading and Floor Construction

An important clarification for architects and structural engineers: not all data centers use raised floors, and the industry is moving decisively away from them in high-density AI environments. While raised floors remain common in colocation and legacy enterprise facilities, where the underfloor air plenum historically served as the primary cooling distribution pathway, new-build AI and HPC data centers overwhelmingly favor reinforced slab-on-grade construction.

Three factors drive this shift. First, weight: a fully loaded GPU rack concentrates 3,000 pounds in under 7 square feet, creating point loads nearly double the capacity of standard raised floor panels. Flooded Coolant Distribution Units can reach 6,600 pounds. Second, liquid cooling eliminates the underfloor plenum’s purpose entirely; once rack densities exceed 30 to 40 kW, air cannot transfer heat fast enough, and modern AI racks use 70 to 90% liquid cooling. Third, slab-on-grade construction is less expensive, provides superior seismic performance in Western U.S. seismic zones, and simplifies equipment anchoring.

Purpose-built AI data halls use reinforced concrete slabs rated for 250 to 350+ PSF, well above the ASCE 7-22 code minimum of 100 PSF for access floors. TIA-942-C specifies a minimum of 150 PSF and recommends 250 PSF for Rated-3 and Rated-4 facilities. The emerging best practice is a hybrid campus approach: slab-on-grade in AI and GPU halls, with raised floors optional in traditional IT areas and administrative buildings.
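The loading figures above can be compared with a few lines of arithmetic. The sketch below is a rough screening calculation, not a structural check: it divides equipment weight by area and lists which of the quoted ratings the result exceeds. The 16-square-foot tributary area (rack plus a share of aisle) is an assumption for illustration, and the function names are hypothetical.

```python
# Floor-load figures quoted in the text, in pounds per square foot (PSF).
RATINGS_PSF = {
    "ASCE 7-22 access-floor minimum": 100.0,
    "TIA-942-C minimum": 150.0,
    "TIA-942-C recommended (Rated-3/4)": 250.0,
    "AI hall slab, upper range": 350.0,
}


def load_psf(weight_lb: float, area_sqft: float) -> float:
    """Return the average load in PSF for a weight spread over an area."""
    if area_sqft <= 0:
        raise ValueError("area must be positive")
    return weight_lb / area_sqft


def ratings_exceeded(load: float, ratings: dict = RATINGS_PSF) -> list:
    """Return the names of the ratings that the given load exceeds."""
    return [name for name, limit in ratings.items() if load > limit]


if __name__ == "__main__":
    footprint = load_psf(3000.0, 7.0)   # load over the rack footprint itself
    averaged = load_psf(3000.0, 16.0)   # assumed tributary area incl. aisle share
    print(f"footprint: {footprint:.0f} PSF, exceeds {ratings_exceeded(footprint)}")
    print(f"averaged:  {averaged:.1f} PSF, exceeds {ratings_exceeded(averaged)}")
```

A 3,000-pound rack on 7 square feet works out to roughly 429 PSF over its own footprint, and about 188 PSF when averaged over the assumed 16 square feet. Actual slab design depends on concentrated-load, dynamic, and seismic checks by the structural engineer of record.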

The Cooling Revolution: From Air to Liquid

Traditional air cooling is physically insufficient at AI-scale densities. Per unit volume, liquid coolants carry roughly 1,000 to 3,000 times more heat than air, which is why AI facilities now require liquid cooling as a physical necessity rather than an optional upgrade.
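One way to see where a figure in the 1,000-to-3,000 range comes from is the basic heat-transport relation Q = density × volumetric flow × specific heat × temperature rise. The sketch below solves it for the flow needed to remove a given heat load. The fluid properties are textbook room-temperature values for pure water and air, and the 10 K temperature rise applied to both fluids is an assumption for comparison, not a design value.

```python
# Approximate room-temperature properties (textbook values).
WATER = {"density": 997.0, "cp": 4186.0}  # kg/m^3, J/(kg*K)
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)


def volumetric_flow_m3_per_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Flow needed to carry heat_w watts at a temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


def volumetric_heat_capacity_ratio(liquid: dict = WATER, gas: dict = AIR) -> float:
    """How many times more heat the liquid carries per unit volume per kelvin."""
    return (liquid["density"] * liquid["cp"]) / (gas["density"] * gas["cp"])


if __name__ == "__main__":
    rack_heat_w = 132_000.0  # lower end of the GB300 NVL72 range quoted earlier
    water = volumetric_flow_m3_per_s(rack_heat_w, 10.0, WATER)
    air = volumetric_flow_m3_per_s(rack_heat_w, 10.0, AIR)
    print(f"water: {water * 1000:.2f} L/s")
    print(f"air:   {air:.1f} m^3/s")
    print(f"ratio: {volumetric_heat_capacity_ratio():.0f}x")
```

For a 132 kW rack at a 10 K rise, this gives roughly 3.2 liters per second of water against about 10.9 cubic meters per second of air. Pure water lands slightly above the top of the quoted range; glycol mixes and dielectric fluids have lower volumetric heat capacity and would land lower.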

Key Cooling Crossover Thresholds

The modern liquid cooling ecosystem comprises five primary cooling technologies, each suited to different density ranges and facility constraints, plus the Coolant Distribution Units (CDUs) that support most of them:

Direct-to-Chip Cooling (DTC)

The dominant cooling technology for AI and HPC as of 2026. Micro-channel copper cold plates mount directly onto GPUs and CPUs, with coolant flowing through rack manifolds to a Coolant Distribution Unit (CDU). A typical DTC deployment removes 70 to 80% of rack heat directly at the source; the remaining heat from memory, drives, and power distribution still requires supplemental air cooling. Rack-scale systems like the GB300 NVL72 go further, relying on integrated direct liquid cooling that captures up to 98% of the equipment’s heat. A CDU is required.

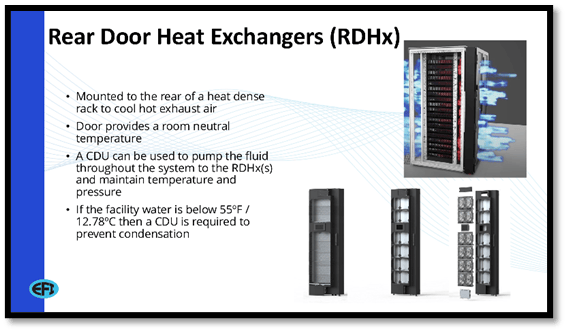
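The capture percentages for direct-to-chip cooling translate directly into a residual air-cooling load. The short sketch below splits a rack's heat into its liquid and air portions; the function name is hypothetical, and the 132 kW rack is taken from the GB300 NVL72 range quoted earlier.

```python
def split_rack_heat(rack_kw: float, liquid_capture_fraction: float) -> tuple:
    """Return (liquid_kw, air_kw) for a rack at the given liquid capture fraction."""
    if not 0.0 <= liquid_capture_fraction <= 1.0:
        raise ValueError("capture fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_capture_fraction
    return liquid_kw, rack_kw - liquid_kw


if __name__ == "__main__":
    for fraction in (0.70, 0.80, 0.98):
        liquid_kw, air_kw = split_rack_heat(132.0, fraction)
        print(f"{fraction:.0%} capture: {liquid_kw:.1f} kW to liquid, "
              f"{air_kw:.1f} kW to air")
```

At 70 to 80% capture, a 132 kW rack still rejects roughly 26 to 40 kW to the room air, which is more than an entire standard enterprise rack at 5 to 15 kW. At 98% capture the residual drops to under 3 kW, which is why the capture fraction matters so much when sizing supplemental air systems.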

Rear Door Heat Exchangers (RDHx)

A liquid-to-air heat exchanger panel mounted to the rear of a heat-dense rack, cooling hot exhaust air before it enters the room and providing room-neutral discharge temperature. RDHx units connect to facility chilled water. If the supply water temperature is below 55°F (12.8°C), a CDU is required to prevent condensation. RDHx is an excellent retrofit solution for existing air-cooled facilities transitioning to higher densities.

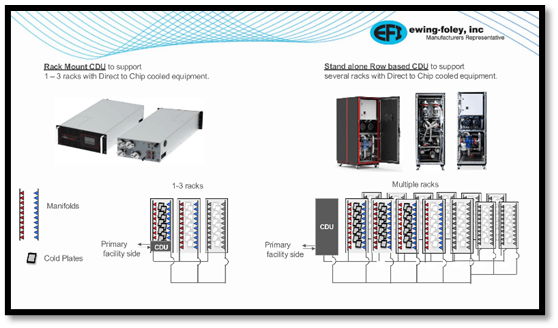
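The condensation concern comes down to whether the supply water is colder than the dew point of the room air. The sketch below estimates dew point with the Magnus approximation and flags a risk when the water temperature is at or below it. The Magnus coefficients are a commonly used set, the room conditions in the example are assumptions, and the function names are hypothetical; a real design would use the project's psychrometric criteria and a safety margin.

```python
import math

# Commonly used Magnus coefficients for water vapor over liquid water.
MAGNUS_A = 17.62
MAGNUS_B = 243.12  # degrees Celsius


def dew_point_c(dry_bulb_c: float, relative_humidity_pct: float) -> float:
    """Approximate dew point in Celsius using the Magnus formula."""
    if not 0.0 < relative_humidity_pct <= 100.0:
        raise ValueError("relative humidity must be in (0, 100]")
    gamma = (math.log(relative_humidity_pct / 100.0)
             + MAGNUS_A * dry_bulb_c / (MAGNUS_B + dry_bulb_c))
    return MAGNUS_B * gamma / (MAGNUS_A - gamma)


def fahrenheit_to_celsius(temp_f: float) -> float:
    return (temp_f - 32.0) / 1.8


def condensation_risk(supply_water_f: float, room_dry_bulb_c: float,
                      room_rh_pct: float) -> bool:
    """True when supply water is at or below the room air dew point."""
    return fahrenheit_to_celsius(supply_water_f) <= dew_point_c(
        room_dry_bulb_c, room_rh_pct)


if __name__ == "__main__":
    # Assumed room condition for illustration: 24 C dry bulb, 50% RH.
    print(f"dew point: {dew_point_c(24.0, 50.0):.1f} C")
    print("50 F water at risk:", condensation_risk(50.0, 24.0, 50.0))
    print("65 F water at risk:", condensation_risk(65.0, 24.0, 50.0))
```

For the assumed room at 24°C and 50% relative humidity, the estimated dew point is about 12.9°C, close to the 55°F threshold cited above, so colder supply water would be expected to sweat on the coil and piping.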

Coolant Distribution Units (CDUs)

Often described as the hydraulic heart of a liquid-cooled data center, CDUs circulate coolant (water or water/glycol) through cold plates, RDHx units, and in-row coolers while maintaining precise temperature, flow, and pressure control. Critically, CDUs isolate the IT cooling loop from the facility water system through an internal plate heat exchanger, protecting sensitive electronics from contaminants in the building water. CDUs are available in rack-mount configurations supporting 1 to 3 racks, and standalone row-based units supporting multiple racks per CDU.

In-Row Coolers (IRCs)

Rack-height units installed between server racks that draw hot air from the hot aisle, cool it through a liquid-cooled coil, and release cold air into the cold aisle. IRCs require hot/cold aisle containment and function as localized precision cooling targeted directly at the heat source. The heated coolant returns to a CDU or chiller for recirculation.

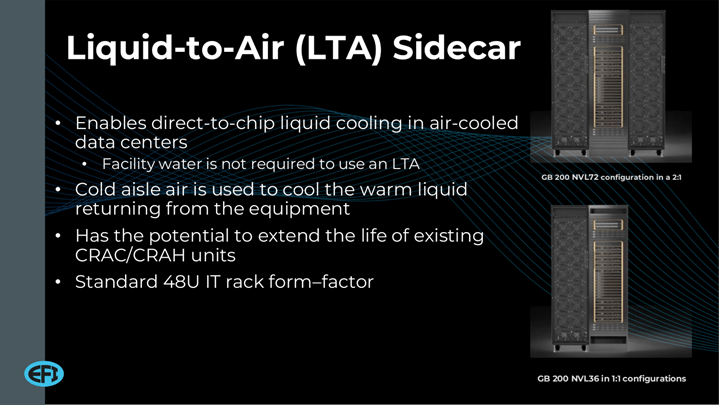

Liquid-to-Air (LTA) Sidecars

A critical bridging technology for existing air-cooled data centers. LTA sidecars are self-contained units adjacent to liquid-cooled racks that use cold aisle air to cool warm liquid returning from direct-to-chip equipment. No facility water connection is required. This enables liquid cooling deployment in legacy facilities without plumbing infrastructure changes, extending the useful life of existing CRAC/CRAH units. The units come in a standard 48U rack form factor.

Immersion Cooling

Submerges entire servers in a non-conductive dielectric fluid, eliminating the need for internal server fans and enabling extreme densities of 50 to 250+ kW per rack. Single-phase immersion systems achieve PUE ratings as low as 1.02 to 1.10. Specialized CDUs compatible with dielectric fluids are required.

The 800V DC Power Evolution

As AI racks push toward megawatt-scale power demands, traditional low-voltage AC distribution becomes physically impractical. The industry is responding with 800V Direct Current (DC) architectures that eliminate multiple AC-to-DC conversion stages, reducing power losses, heat generation, and copper requirements.

At NVIDIA GTC 2026 in March, Vertiv, Texas Instruments, STMicroelectronics, and Infineon showcased production-ready 800V DC power delivery ecosystems. Industry data indicates 800V DC distribution can reduce copper requirements by 45%, improve efficiency by 5%, and lower total cost of ownership by 30% at gigawatt-scale facilities. For design teams, this transition means rethinking power distribution from the medium-voltage switchgear through the rack, with new safety and coordination requirements across electrical and technology disciplines.
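The case for higher distribution voltage follows from current: for the same power, current falls in proportion to voltage, and conductor size follows current. The sketch below compares the current needed to deliver 1 MW at 800 V DC against two assumed comparison points, a 54 V DC in-rack bus and a 415 V three-phase AC feed at 0.95 power factor. Those comparison voltages and the power factor are illustrative assumptions, not figures from the sources cited above.

```python
import math


def dc_current_a(power_w: float, voltage_v: float) -> float:
    """Current in amperes for a DC load: I = P / V."""
    if voltage_v <= 0:
        raise ValueError("voltage must be positive")
    return power_w / voltage_v


def three_phase_ac_current_a(power_w: float, line_voltage_v: float,
                             power_factor: float = 0.95) -> float:
    """Line current for a balanced three-phase load: I = P / (sqrt(3) * V * pf)."""
    if line_voltage_v <= 0 or not 0.0 < power_factor <= 1.0:
        raise ValueError("voltage must be positive and power factor in (0, 1]")
    return power_w / (math.sqrt(3) * line_voltage_v * power_factor)


if __name__ == "__main__":
    power_w = 1_000_000.0  # a hypothetical 1 MW rack
    print(f"800 V DC:        {dc_current_a(power_w, 800.0):,.0f} A")
    print(f"54 V DC:         {dc_current_a(power_w, 54.0):,.0f} A")
    print(f"415 V 3-ph AC:   {three_phase_ac_current_a(power_w, 415.0):,.0f} A per line")
```

At 800 V DC a 1 MW rack draws 1,250 A, compared with more than 18,000 A on a 54 V bus. Because resistive loss in a given conductor scales with the square of the current, that difference is a large part of why higher-voltage DC reduces both copper and heat.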

Mission-Critical Redundancy: Engineering Uptime

Uptime is the fundamental currency of data center design. Downtime can cost operators millions of dollars per minute, which is why facilities must integrate specific levels of redundancy across electrical, mechanical, and telecommunications systems to eliminate single points of failure.

Redundancy applies across all building systems: electrical (UPS, generators, switchgear, distribution paths), mechanical (chillers, pumps, CDUs, piping loops), and telecommunications (carrier routes, fiber paths, meet-me rooms). The MEP engineer’s role is to ensure these redundant paths are truly independent, with physical separation and no shared single points of failure in routing, structural support, or controls.
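The value of independence can be illustrated with a simple probability sketch. Assuming each unit (a chiller, UPS module, or pump) is available with the same probability and fails independently of the others, the chance that enough units are running follows a binomial distribution. The 99% unit availability below is an illustrative number, not a vendor or industry figure, and the function name is hypothetical.

```python
from math import comb


def prob_capacity_met(units_installed: int, units_required: int,
                      unit_availability: float) -> float:
    """Probability that at least units_required of units_installed are available.

    Assumes identical units that fail independently of one another.
    """
    if not 0 <= units_required <= units_installed:
        raise ValueError("need 0 <= units_required <= units_installed")
    if not 0.0 <= unit_availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    a = unit_availability
    return sum(
        comb(units_installed, k) * a**k * (1.0 - a) ** (units_installed - k)
        for k in range(units_required, units_installed + 1)
    )


if __name__ == "__main__":
    n_required = 4
    print(f"N   (4 of 4): {prob_capacity_met(4, n_required, 0.99):.6f}")
    print(f"N+1 (4 of 5): {prob_capacity_met(5, n_required, 0.99):.6f}")
```

With four units required at 99% availability each, a bare N configuration meets load about 96.1% of the time, while N+1 raises that to about 99.9%. The improvement holds only if failures really are independent; a shared pipe rack, control panel, or conduit breaks the assumption, which is why physical separation matters as much as unit count.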

Supporting infrastructure such as Catcher UPS systems and Static Transfer Switches (STS) enables near-instantaneous failover, typically within 4 to 8 milliseconds, maintaining seamless operations during fault events or planned maintenance.

Network Diversity and Carrier Neutrality

In the era of AI training clusters and high-frequency trading, network resiliency is as critical as power. True redundancy means complete physical separation from end to end. Purchasing circuits from two different carriers provides no protection if both fiber lines share the same underground conduit. Physical path diversity requires that redundant telecommunications routes share no common conduit, manhole, bridge crossing, or any other single point of failure along their entire length. This principle fails in practice far more often than operators realize. Secondary providers frequently lease last-mile transport from the incumbent carrier, creating “false diversity”: two separate invoices for cables running through the same underground conduit. A single excavation accident takes down both.
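A path-diversity audit is, at its core, a check that two routes have no physical element in common. The sketch below models each route as a list of element identifiers (manholes, conduits, bridge crossings, building entries) and reports any overlap. The identifiers are invented for illustration; in practice they would come from carrier route maps or as-built records.

```python
def shared_elements(route_a: list, route_b: list) -> set:
    """Return the physical elements that appear on both routes."""
    return set(route_a) & set(route_b)


def is_physically_diverse(route_a: list, route_b: list) -> bool:
    """True only when the two routes share no physical element at all."""
    return not shared_elements(route_a, route_b)


if __name__ == "__main__":
    # Hypothetical routes: two carriers, two invoices, one shared conduit.
    carrier_a = ["MH-101", "CONDUIT-7", "BRIDGE-2", "ENTRY-NORTH"]
    carrier_b = ["MH-204", "CONDUIT-7", "ENTRY-SOUTH"]

    overlap = shared_elements(carrier_a, carrier_b)
    if overlap:
        print("False diversity, shared elements:", sorted(overlap))
    else:
        print("Routes are physically diverse")
```

In this example the two circuits enter the building on opposite sides yet both pass through CONDUIT-7, so a single excavation accident at that conduit would cut both.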

Per TIA-942-C, Rated-3 (Concurrently Maintainable) facilities require at least two physically separated pathways for backbone and entrance cabling, entering from opposite building sides and traversing independent fire zones. Rated-4 (Fault Tolerant) mandates dual independent active paths with full physical isolation. Diverse building entry points must be spaced at least 20 meters (66 feet) apart. Best practice calls for dual meet-me rooms (MMRs) to avoid a single room becoming a single point of failure. For design teams, this means early coordination of conduit routing, penetration locations, fire-rated pathway separations, and technology room cooling. At DWE, our Technology discipline works in parallel with mechanical and electrical from day one, not as an afterthought.

Campus-Level Master Planning

Data centers are no longer single buildings. Modern campuses spanning hundreds of acres function as self-sustaining ecosystems. For architects, this means master planning a coordinated set of building types, each with distinct performance requirements:

- Data Halls: the core mission-critical compute environments

- Dedicated Substations: brought to the front of the schedule due to long lead times for transformers

- Central Utility Plants: chiller yards, cooling towers, CDU arrays, and thermal energy storage

- Administrative and NOC Buildings: Network Operations Center for monitoring network traffic, server health, and application performance

- Security Operations Center (SOC): a dedicated, 24/7-staffed command room for monitoring and responding to all physical security systems, including video surveillance, perimeter intrusion detection, access control, and alarm management

- Warehousing and Logistics: staging and unboxing of delicate IT hardware

- Security Portals and Guardhouses: layered authentication, video surveillance, perimeter intrusion detection systems, and vehicle barriers

- Cold Storage and Archive Buildings: high-capacity, low-power-density environments for long-term data retention

- Workforce Housing: often necessary in remote markets to house specialized construction teams

Multi-phase construction is the norm. Phase One establishes the infrastructure backbone (underground networks of power, water, and fiber), intentionally over-engineered so Phase Two and Three data halls can be constructed concurrently while active environments remain fully operational. Every building type on the campus carries its own MEP, technology, and security requirements, and the coordination burden across disciplines is significant.

Navigating Constraints in the Western U.S.

The Water-Energy Nexus

In the arid West, Water Usage Effectiveness (WUE) has become a board-level metric alongside PUE. The tradeoff is real: reducing water consumption through air-based or closed-loop cooling often increases electrical load. Optimizing WUE cannot be done in isolation; it must be coordinated with PUE, electrical capacity, utility tariffs, and carbon goals. Water-smart design strategies that DWE engineers evaluate with clients include:

- Hybrid and air-based cooling leveraging outside air, high-efficiency heat exchangers, and advanced containment to minimize evaporative water use

- Closed-loop and indirect evaporative cooling (IDEC) systems that eliminate continuous potable water consumption

- Raised operating temperature setpoints backed by robust controls, since modern IT equipment tolerates higher supply air temperatures than legacy designs assumed

- Reclaimed and non-potable water sources for cooling towers and landscaping, with on-site treatment to maximize reuse cycles

- Real-time water metering integrated into the BMS, with trend logs and alarms to flag abnormal usage, hidden leaks, and poorly tuned setpoints
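The WUE and PUE tradeoff described above can be made concrete with the standard definitions: PUE is total facility energy divided by IT energy, and WUE is annual site water use in liters divided by IT energy in kWh. The sketch below compares two cooling options for the same IT load. All of the energy and water quantities are made-up illustrative inputs, not measurements from any facility.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy per unit of IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh / it_kwh


def wue(site_water_liters: float, it_kwh: float) -> float:
    """Water Usage Effectiveness in liters per kWh of IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return site_water_liters / it_kwh


if __name__ == "__main__":
    it_kwh = 10_000_000.0  # hypothetical annual IT energy

    # Hypothetical options: evaporative cooling uses less energy but more
    # water; dry cooling uses almost no water but more energy.
    options = {
        "evaporative": {"total_kwh": 12_000_000.0, "water_l": 18_000_000.0},
        "dry cooling": {"total_kwh": 13_500_000.0, "water_l": 500_000.0},
    }
    for name, o in options.items():
        print(f"{name}: PUE {pue(o['total_kwh'], it_kwh):.2f}, "
              f"WUE {wue(o['water_l'], it_kwh):.2f} L/kWh")
```

With these illustrative inputs, the dry option cuts WUE from 1.8 to 0.05 L/kWh but raises PUE from 1.20 to 1.35, which is the kind of tradeoff that has to be weighed against electrical capacity, tariffs, and carbon goals.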

Nevada has already banned evaporative cooling in all new data center developments. California Assembly Bill 93, if passed, would mandate energy and water use reporting for data center operators. The regulatory trajectory is clear, and proactive design is the best risk mitigation available.

Behind-the-Meter Power Generation

Grid interconnection delays now stretch to five or more years in many Western U.S. jurisdictions, with the U.S. interconnection queue exceeding 2,600 GW and project withdrawal rates approaching 80%. Developers are increasingly turning to on-site, behind-the-meter generation: natural gas aeroderivative turbines, Battery Energy Storage Systems (BESS), on-site solar, and fuel cells provide bridge power while long-term grid connections are secured. Small Modular Reactors (SMRs) are being explored for future baseload supply.

For design teams, this means planning facilities that can operate in islanded mode, transition between power sources seamlessly, and scale generation capacity as the campus grows. The site plan must accommodate not just the data halls, but the fuel storage, emissions controls, and utility interconnection infrastructure that behind-the-meter generation requires.

Regulatory and Community Engagement

As data center energy consumption grows (projected to reach 6 to 8% of total U.S. electricity by 2030), municipalities are pushing back with stricter zoning, noise ordinances, and water-use conditions. Understanding property rights, navigating municipal mandates, and proactively engaging communities with transparent sustainability data are non-negotiable for successful entitlements. Architects play a central role in this conversation, shaping the community-facing narrative through building massing, screening strategies, landscaping, and acoustic design.

How Design West Engineering Supports Data Center Development

Design West Engineering is a mission-critical-focused firm where MEP, Fire Alarm, and Fire Protection design are the core of our practice. What distinguishes our approach is integration: our Technology discipline (rack layouts, structured cabling, network rooms, security systems, and audiovisual systems) is designed in parallel with mechanical, electrical, and plumbing systems, not as a separate scope layered in after the fact.

Our Commissioning Authority (CxA) team acts as an independent advocate during construction and startup, verifying that systems are installed per design intent, perform under real-world conditions, and meet the stringent efficiency and reliability standards these facilities demand. Beyond commissioning, we also support ongoing facility operations, helping operators optimize system performance, troubleshoot issues, and plan phased upgrades that maintain uptime while improving WUE, PUE, and overall resilience over time. For data center clients, we provide:

- Holistic water and energy strategy development, defining target WUE and PUE ranges by region and project phase

- Scenario-based cooling concept development with first-cost and life-cycle cost comparisons, utility impacts, and architectural implications

- Detailed MEP design including piping, ductwork, equipment selections, and control sequences integrated with advanced metering and BMS logic

- Technology systems design (rack layouts, structured cabling, network rooms, security, and access control) coordinated with MEP from schematic design through commissioning

- Existing facility assessments and operational support with phased upgrade roadmaps that maintain uptime while improving performance

- Dry utility coordination and multi-phase campus infrastructure planning

We serve data centers, edge facilities, and mission-critical environments across the Western U.S., including Southern California, the Pacific Northwest, Arizona, Nevada, and beyond.

Designing for What’s Next

Designing next-generation data centers in the Western U.S. is no longer merely a real estate or IT exercise. It is a multi-disciplinary systems challenge requiring the coordinated expertise of mechanical, electrical, plumbing, technology, fire protection, and commissioning professionals, all working from a shared understanding of the physics, the economics, and the regulatory landscape.

By mastering the nuances of capital-intensive business models, embracing liquid-cooled AI architectures, engineering robust redundant systems, managing water and energy as interconnected resources, and deploying strategic multi-phase construction, development teams can build dynamic digital cities that are secure, scalable, and future-proof.

If you are a developer, facility director, planner, or architect working on data center projects in the Western U.S., Design West Engineering would welcome a conversation. Connect with our team at designwesteng.com or call (909) 890-3700.